The Invisible Insidious Hand: How Tech Algorithms Shape Individuals and Society

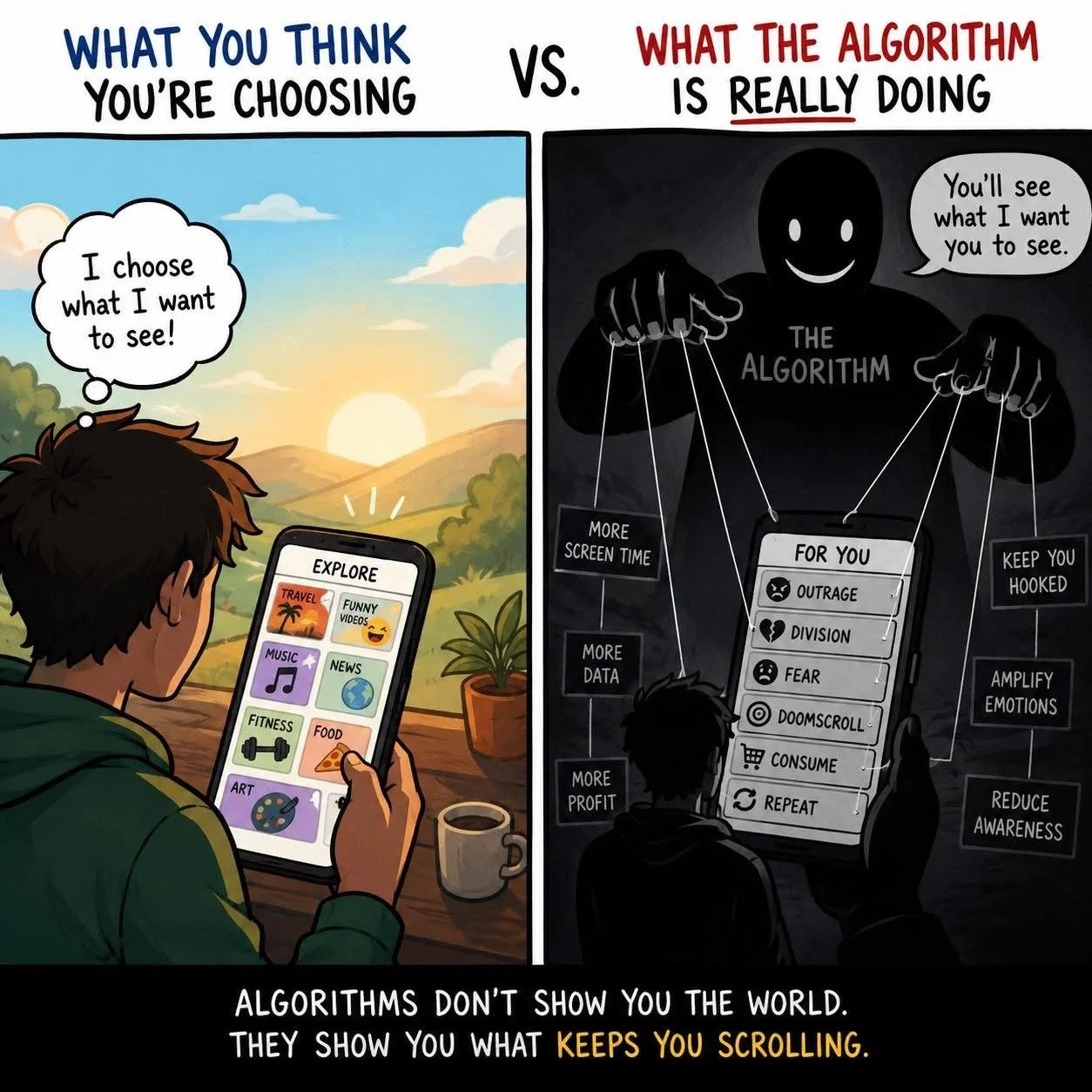

Tech algorithms (algos) have quietly become one of the most powerful forces shaping human experience. We can all get trapped by this invisible, insidious hand. It warps our experience of reality and shapes our behavior, perpetuating division, duality, and polarity through hidden agendas, deflection, information overload, cognitive dissonance, and emotional extremes.

Algos operate behind the scenes of nearly every digital interaction. From social media feeds and search engines to financial markets and healthcare systems, algos influence not only what we see but also how we think, behave, and relate to one another.

Algos are complex sets of rules, encoded in software, that sort, recommend, predict, and decide on your behalf based on your online activity and interests. Their impact is both profound and paradoxical: they offer unprecedented convenience and personalization while raising serious concerns about autonomy, bias, and social cohesion.

At the level of the individual, algos act as curators of reality and can have a deep impact on everyone, even the most aware and discerning meditators.

Social media platforms and streaming services use predictive models to tailor content based on past behavior, preferences, and engagement patterns. This personalization can feel helpful: users discover music they love, news that interests them, and products they might genuinely need. However, the same mechanism can narrow one's informational world.

By continuously feeding content that aligns with existing beliefs and tastes, algos can reinforce cognitive biases and limit exposure to diverse perspectives. Over time, this creates what is often called a “filter bubble,” where individuals become less aware of alternative viewpoints and more confident in their own.

This has psychological consequences.

Because algos are constantly optimized for engagement, they often prioritize emotionally charged or attention-grabbing content. As a result, users may experience heightened anxiety, comparison, or outrage. The individual is no longer just consuming content; they are being subtly guided toward behaviors that maximize time spent on platforms. In this sense, algos do not merely reflect human preferences; they actively shape them.

On a broader societal level, the effects are even more significant.

Algos influence public discourse by determining which stories trend, which voices are amplified, and which are suppressed. This can distort democratic processes. Misinformation, once a fringe issue, can now spread rapidly when it aligns with engagement-driven algorithmic incentives. Political polarization is often intensified as groups are fed increasingly extreme content that reinforces in-group identity and distrust of others.

Moreover, algo systems are not neutral.

They are built by humans and trained on historical data, which may contain embedded biases. In areas such as hiring, lending, policing, and healthcare, algorithmic decisions can unintentionally perpetuate inequality. For example, if a hiring algo is trained on past company data that favored certain demographics, it may continue to disadvantage others, even without explicit intent. This raises ethical questions about fairness, accountability, and transparency.

Despite these challenges, algos also hold potential for positive transformation. In medicine, they can assist in early disease detection and personalized treatment plans. In education, they can adapt learning to individual needs. In environmental science, they can optimize energy use and model climate patterns.

The issue, therefore, is not the existence of algos, but how they are designed, deployed, and governed.

To navigate this landscape, a more conscious relationship with technology is required.

Individuals can cultivate awareness of how their digital environments are shaped and seek out diverse sources of information.

At the societal level, there is a growing need for regulation, ethical standards, and transparency in algorithmic systems. Designers and engineers must take responsibility for the broader impact of their creations, embedding values such as fairness, accountability, and human well-being into their work.

Ultimately, algos are mirrors and amplifiers of human intention.

They reflect our priorities (efficiency, engagement, profit) and also magnify their consequences.

As their influence continues to grow, the central question becomes not what algos can do, but what they should do.

The answer will define not only the future of technology, but the future of human society itself.

At a practical level, each of us should reflect every day on how algos affect our mental and emotional health and our perception of reality, and then adapt accordingly to loosen the grip of the invisible hand.

AI-generated image